Good times… I’ve got an XFS file system that I need more space on. Fortunately, the partition in question is the last one on the disk and also the last one in LVM. The system runs on VMware vSphere, so adding space is no problem: shut the machine down, dial up more disk, boot it back up. The original disk was 100 GB in size with ~80 GB in /var; the new disk is 420 GB.

First issue: the LVM tools don’t see the partition as being any larger. Well, that’s because the partition itself on the physical drive isn’t larger. Okay, so step one is to grow the partition. This is a GPT partition, though, created with parted, so things are a bit different from the fdisk days.

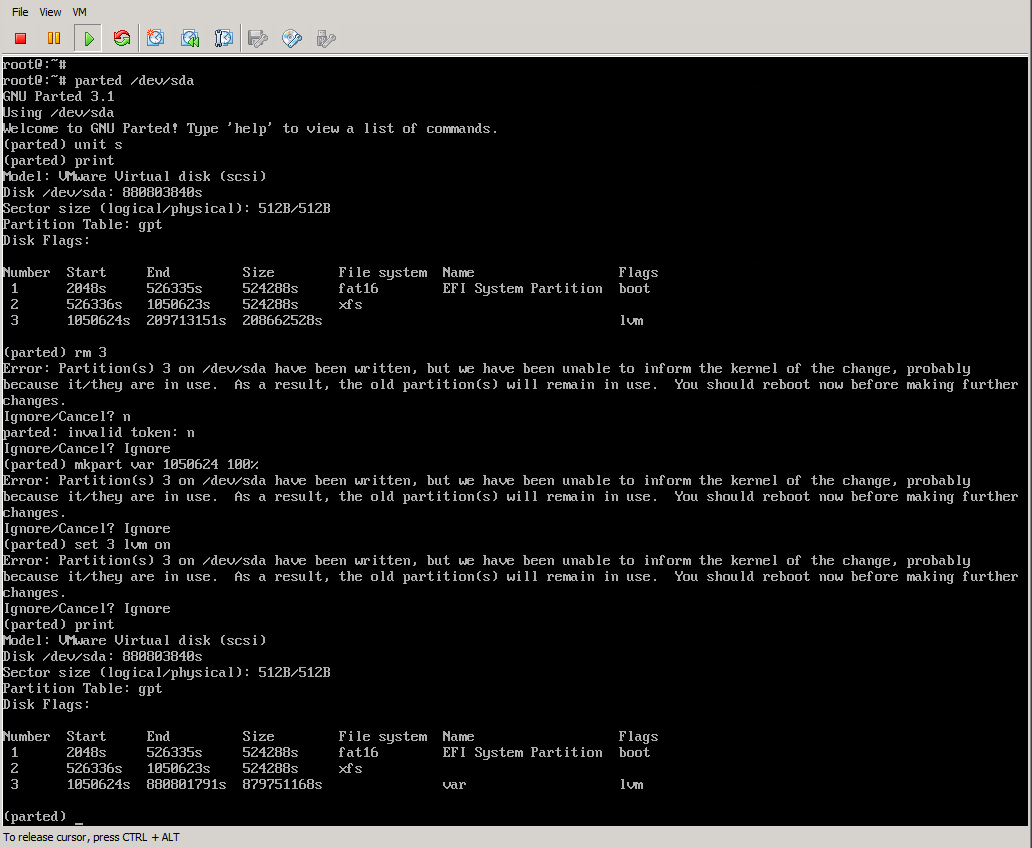

- Start `parted`

- Set the display units to sectors by typing `unit s`: `(parted) unit s`

- Type `print` to display the current setup: `(parted) print`

- Remove the LVM partition, #3 in my case: `(parted) rm 3`

- Recreate the partition with the same starting sector, so in my example: `(parted) mkpart var 1050624 100%`

- Set the LVM flag back on: `(parted) set 3 lvm on`

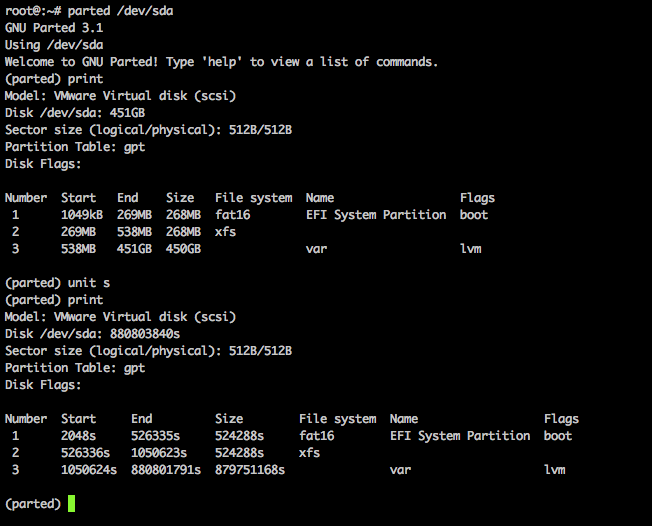
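This works because `rm` and `mkpart` only change the partition table entry. The recreated partition starts at the same sector, so the LVM data stays where it was and the partition simply gets a later end sector.

Below is a minimal Python sketch of that arithmetic. It makes several assumptions that are not from the post:

- 512-byte sectors.
- A standard GPT whose backup header and 128-entry array occupy the last 33 sectors.
- parted's `100%` end resolving to the last usable sector.
- The 100 GB and 420 GB disks being binary GiB.

`partition_span` is a hypothetical helper, not part of parted.

```python
# Sketch: where partition 3 ends before and after the disk was grown.
# Assumptions (not from the original post): 512-byte sectors, a standard GPT
# whose backup header + 128-entry array occupy the last 33 sectors, and
# parted's "100%" end resolving to the last usable sector.
SECTOR_BYTES = 512
GIB = 1024 ** 3
GPT_BACKUP_SECTORS = 33
START_SECTOR = 1050624  # start of partition 3, as read from "(parted) print"


def partition_span(disk_gib: int, start: int = START_SECTOR) -> tuple[int, int, int]:
    """Return (start, end, length) in sectors for a partition running to the end of the disk."""
    total_sectors = disk_gib * GIB // SECTOR_BYTES
    last_usable = total_sectors - GPT_BACKUP_SECTORS - 1  # LBAs are 0-based
    return start, last_usable, last_usable - start + 1


for disk in (100, 420):
    start, end, length = partition_span(disk)
    print(f"{disk} GiB disk: sectors {start}-{end}, {length * SECTOR_BYTES / GIB:.2f} GiB")
```

Under these assumptions the old partition works out to about 99.5 GiB, in line with the 99.5 GB volume group size `vgdisplay` reports. The start sector is identical in both cases, which is the whole point of recreating the partition rather than moving it.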

Looking good, reboot time. After the reboot the system comes up, but in parted’s default display mode (decimal MB/GB rather than sectors), it seems to think the new disk is 450 GB:

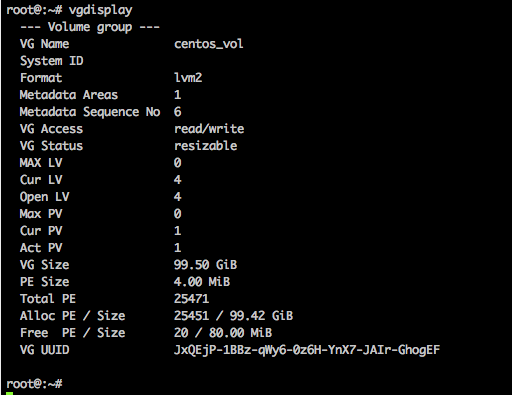
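The 450 GB figure is most likely a units mismatch rather than anything wrong with the partition. parted's `GB` is a decimal gigabyte (10^9 bytes), while vSphere sizes virtual disks in binary gigabytes (2^30 bytes) even though it labels them GB. A quick Python check of the conversion, assuming the 420 GB disk is really 420 GiB:

```python
# Convert a binary size (GiB, as vSphere is assumed to allocate it) into the
# decimal GB that parted prints in its default display unit.
GIB_BYTES = 2 ** 30  # binary gigabyte
GB_BYTES = 10 ** 9   # decimal gigabyte, parted's "GB"


def gib_to_gb(gib: float) -> float:
    return gib * GIB_BYTES / GB_BYTES


for size in (100, 420):
    print(f"{size} GiB = {gib_to_gb(size):.2f} GB")
```

To compare like with like inside parted, `unit GiB` switches the display to binary units.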

Okay, so now we move on to LVM tasks. Let’s see how the volume group looks with `vgdisplay`:

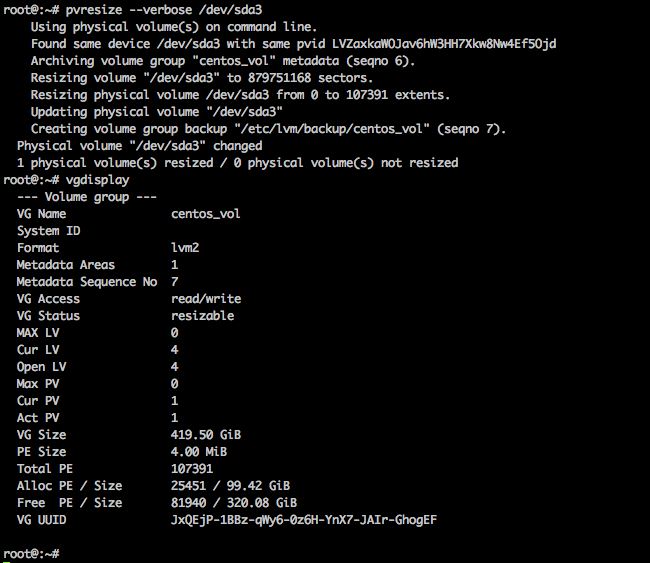

It’s still showing the 100 GB (99.5 GB) volume group size, so obviously LVM doesn’t pick up the new space automatically. We’re going to run the `pvresize` command to tell LVM to claim that new space, then use `vgdisplay` to confirm that the volume group did indeed grow to the ~420 GB I was expecting:

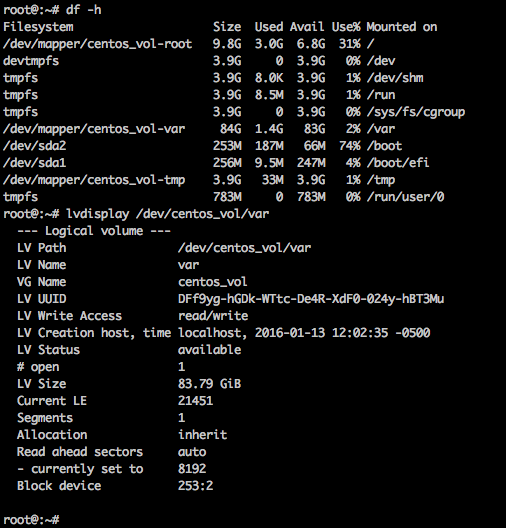
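`pvresize` turns the extra partition space into free physical extents, and `vgdisplay` reports the volume group size as extent count × extent size. Below is a Python sketch of that bookkeeping. It assumes:

- LVM's common defaults: a 4 MiB extent size and a 1 MiB offset before the first extent (check `PE Size` in your own `vgdisplay` output).
- Hypothetical partition lengths: sector 1050624 to the last usable GPT sector of a 100 GiB and a 420 GiB disk with 512-byte sectors.

`extent_count` is an illustrative helper, not an LVM function.

```python
# Sketch: how many physical extents (PEs) a PV of a given size provides.
# Assumptions: 512-byte sectors, 4 MiB extents (8192 sectors), and the
# first extent starting 1 MiB (2048 sectors) into the PV.
PE_SECTORS = 8192
PE_START_SECTORS = 2048
GIB_SECTORS = 2 ** 30 // 512

# Hypothetical partition lengths in sectors: sector 1050624 to the last
# usable GPT sector of a 100 GiB and a 420 GiB disk.
OLD_PV_SECTORS = 208664543
NEW_PV_SECTORS = 879753183


def extent_count(pv_sectors: int) -> int:
    """Whole extents that fit after the metadata area; the remainder is unused."""
    return (pv_sectors - PE_START_SECTORS) // PE_SECTORS


old_pe = extent_count(OLD_PV_SECTORS)
new_pe = extent_count(NEW_PV_SECTORS)
added = new_pe - old_pe
print(f"before pvresize: {old_pe} PEs = {old_pe * PE_SECTORS / GIB_SECTORS:.2f} GiB")
print(f"after pvresize:  {new_pe} PEs = {new_pe * PE_SECTORS / GIB_SECTORS:.2f} GiB")
print(f"new free PEs:    {added} = {added * PE_SECTORS / GIB_SECTORS:.2f} GiB")
```

If the volume group was fully allocated before the resize, those 81,920 new extents (320 GiB) are the free space that `lvextend` has to work with next.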

Woohoo, we’re making progress now. Next up is growing the logical volume. /var is still mounted, which is no problem, and here’s how the volume looks currently:

So let’s run the `lvextend` command to extend my /var volume (the volume, not the file system; we’re not there yet) to 100% of the free space: `lvextend -l 100%FREE /dev/centos_vol/var`

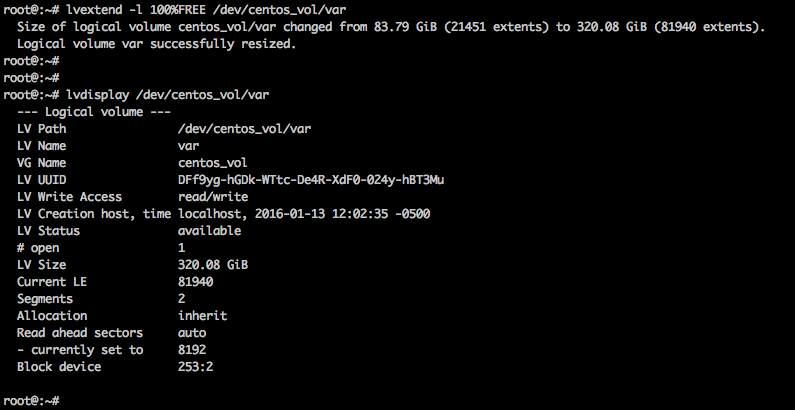

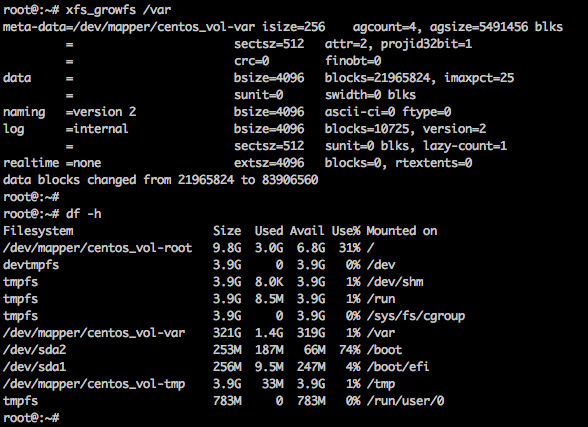

I checked it with `lvdisplay` afterward and things weren’t quite right, but I didn’t notice at first. It grew the volume to 320 GB. Given that my disk is 420 GB (minus some other file systems), I should have paid more attention and noticed that the number wasn’t close to what I expected. I went ahead and ran `xfs_growfs` to grow the file system online:

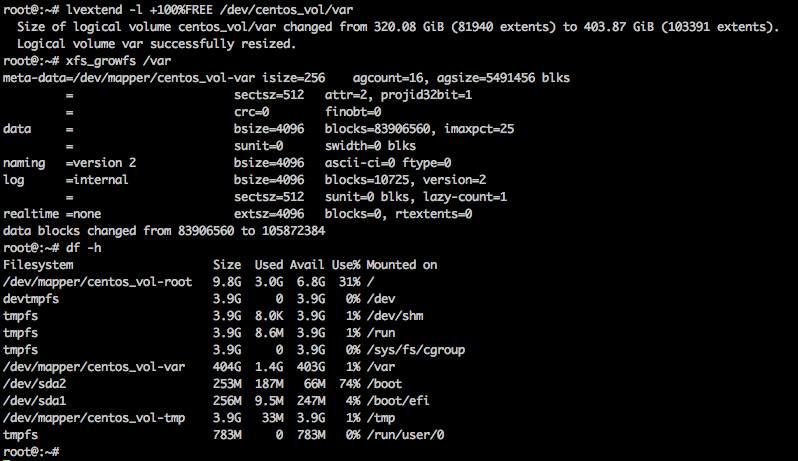
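`xfs_growfs` grows the mounted file system to fill the now-larger logical volume. A quick sanity check is to compare the file system's reported size with what you expect from the disk. A small Python sketch using `os.statvfs`, which reports the same totals `df` uses. `/var` is the mount point from this post; `fs_size_gib` is a hypothetical helper:

```python
import os


def fs_size_gib(mount_point: str) -> float:
    """Total size of the file system mounted at mount_point, in GiB (as df computes it)."""
    st = os.statvfs(mount_point)
    return st.f_frsize * st.f_blocks / 2 ** 30


MOUNT_POINT = "/var"  # the file system grown in this post

if os.path.isdir(MOUNT_POINT):
    # Run before and after xfs_growfs; the second number should be in the
    # neighborhood of the logical volume size shown by lvdisplay.
    print(f"{MOUNT_POINT}: {fs_size_gib(MOUNT_POINT):.1f} GiB")
```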

So at this point I realized I had not gotten the expected amount of space. It turns out my `lvextend` command was wrong for what I intended. If you specify the size WITHOUT a leading + character, `lvextend` treats it as the absolute size to grow to, not as an amount to add. So when I told it `100%FREE`, I was telling it to make the logical volume 100% of the free space in the volume group. I had 320 GB free, so it grew my logical volume to 320 GB instead of adding all 320 GB to it. Prefixing the size with + (`-l +100%FREE`) would have done what I wanted. No big deal, I’ll just re-run `lvextend` correctly and grow the file system again.
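The difference between the two `lvextend` invocations comes down to absolute versus relative sizing. Below is a toy Python model of that rule for the `%FREE` suffix only. It is not LVM's actual code, and it makes these assumptions:

- The extent counts are hypothetical: an 80 GiB /var and 320 GiB free, in 4 MiB extents.
- Percentages are rounded down, where real LVM may round differently.

```python
def lvextend_target(current: int, free: int, arg: str) -> int:
    """Toy model of `lvextend -l [+]N%FREE`: return the LV's new size in extents.

    Without "+", N%FREE is the absolute new size; with "+", it is added
    to the current size. Hypothetical helper, not LVM's implementation.
    """
    relative = arg.startswith("+")
    percent = int(arg.lstrip("+").removesuffix("%FREE"))
    requested = free * percent // 100
    target = current + requested if relative else requested
    if target <= current:
        raise ValueError("new size is not larger than the existing size")
    if target - current > free:
        raise ValueError("not enough free extents in the volume group")
    return target


EXTENTS_PER_GIB = 256  # 4 MiB extents (assumption)
var_lv, vg_free = 80 * EXTENTS_PER_GIB, 320 * EXTENTS_PER_GIB

first = lvextend_target(var_lv, vg_free, "100%FREE")  # the command from the post
print(first // EXTENTS_PER_GIB)  # 320: the LV becomes 320 GiB, not 80 + 320
fixed = lvextend_target(first, vg_free - (first - var_lv), "+100%FREE")
print(fixed // EXTENTS_PER_GIB)  # 400: the leftover 80 GiB is added on top
```

In this model the corrective `+100%FREE` run lands on the same size a single `+100%FREE` would have produced from the start. That is why re-running `lvextend` and `xfs_growfs` was enough to fix things.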

There we go, that looks better: now I have 404 GB in /var on my 420 GB drive.